Decision makers in a tight spot

The pressure on decision makers to lead their financial institutions onto a path of sustainable economic stability is steadily increasing. On the one hand, the supervisory authority demands more and more information on financial stability, which quite often has to be delivered ad hoc. On the other hand, shareholders expect their returns to be achieved even in economically difficult times. Multi-annual scenario analyses form the basis for banks to demonstrate sustainable financial stability. They have to be conducted across all departments (Risk, Accounting, Controlling and Regulatory Reporting) in a consolidated form and often ad hoc. Ideally, a consistent data warehouse and aligned, coherent IT processes serve as the basis; financial institutions are currently setting these up and developing them further because of the regulatory requirements mentioned above. All relevant financial data from the decentralized operational systems is thereby brought together into a harmonized picture.

Major financial investments have been made in recent years to fulfill the BCBS #239 minimum requirements. It would therefore be advantageous to use the resulting infrastructure to calculate and illustrate further simulation scenarios as well. The investments could pay off even faster through this additional functionality. This proposed solution discusses that approach.

Business and technical challenge

First, the business challenges on the way to a scenario-capable IT architecture have to be overcome; in a second step, the resulting requirements are integrated into the IT architecture. The business challenges include identifying how sensitive the individual components of the KPI value driver trees are to external factors (e.g. macroeconomic variables). Next, it has to be decided how elaborate the modeling approach for each value component needs to be. A large part of “personnel costs”, for example, will remain quite stable in the future if no explicit adjustment measures are planned, so a rather rudimentary simulation approach suffices for such components. Net interest income, by contrast, is decisive for the financial performance of the bank and warrants a more elaborate modeling approach.
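
The distinction between rudimentary and elaborate components can be sketched as a small value driver tree. This is a minimal illustration only: the `Driver` class, the linear sensitivity model, the variable names and all figures are assumptions made for the example, not part of the proposal.

```python
from dataclasses import dataclass, field


@dataclass
class Driver:
    """Leaf of a value driver tree: a baseline value plus linear sensitivities."""
    name: str
    baseline: float
    # Change of the driver per unit change of a macro variable (hypothetical values).
    sensitivities: dict[str, float] = field(default_factory=dict)

    def simulate(self, shocks: dict[str, float]) -> float:
        # Drivers without sensitivities simply return their baseline.
        return self.baseline + sum(
            beta * shocks.get(var, 0.0) for var, beta in self.sensitivities.items()
        )


def simulate_kpi(drivers: list[Driver], shocks: dict[str, float]) -> float:
    """KPI modeled as the sum of its simulated driver components."""
    return sum(d.simulate(shocks) for d in drivers)


# Illustrative figures in EUR million; not taken from the article.
drivers = [
    Driver("net_interest_income", 500.0, {"rate_shift_pp": 40.0, "gdp_growth_pp": 10.0}),
    Driver("personnel_costs", -200.0),  # kept flat: rudimentary approach
]

baseline_result = simulate_kpi(drivers, {})
stress_result = simulate_kpi(drivers, {"rate_shift_pp": -1.0, "gdp_growth_pp": -2.0})
```

In practice the elaborate components would likely use richer models than a linear sensitivity, while stable components such as personnel costs can stay as flat baselines.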

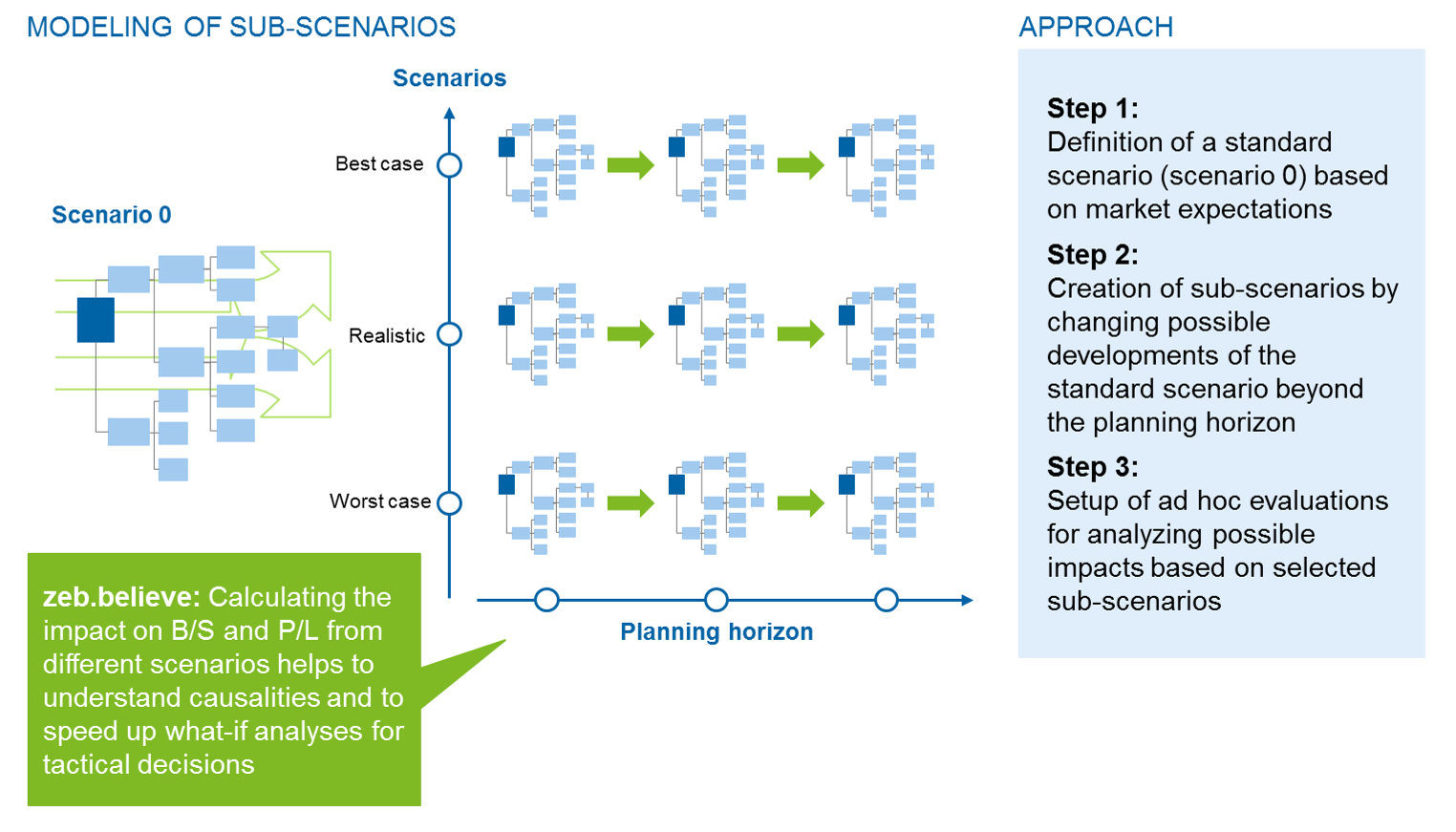

In this proposal, the business composition of the scenarios follows a horizontal and a vertical logic. Horizontal scenario creation means projecting the input parameters forward beyond the planning periods. Vertical scenario creation means combining different input parameters in a plausible and logically consistent way against the backdrop of general market expectations. Both are illustrated in Figure 1.
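
The two directions can be sketched in a few lines. Both functions are hypothetical: the linear extrapolation stands in for whatever projection method a bank actually uses, and the plausibility rule (default rates must not fall while GDP shrinks) is an invented example of a vertical consistency check.

```python
def project_forward(path: list[float], extra_periods: int) -> list[float]:
    """Horizontal step: extend a planned path by repeating its last period-on-period change."""
    if len(path) < 2:
        raise ValueError("need at least two planned periods")
    step = path[-1] - path[-2]
    extended = list(path)
    for _ in range(extra_periods):
        extended.append(extended[-1] + step)
    return extended


def is_plausible(scenario: dict[str, list[float]]) -> bool:
    """Vertical step (hypothetical rule): default rates must not fall in periods where GDP shrinks."""
    gdp = scenario["gdp_growth"]
    default_rate = scenario["default_rate"]
    return all(
        default_rate[t] >= default_rate[t - 1]
        for t in range(1, len(gdp))
        if gdp[t] < 0
    )


# Planned values for two periods (illustrative, in percent).
planned = {"gdp_growth": [1.0, 0.0], "default_rate": [2.0, 2.5]}

# Extend every input parameter by two periods beyond the planning horizon.
scenario = {name: project_forward(path, 2) for name, path in planned.items()}
scenario_ok = is_plausible(scenario)
```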

In addition, cause analysis requires drill-down access to the sub-results within each scenario, based on a consolidated simulation across all pillars.

Enhancing the IT architecture for scenario capability

In the next step, the business requirements have to be integrated into the existing IT architecture.

BCBS #239-compliant IT infrastructures that have been established during the past years have allowed banks to significantly improve their risk aggregation. The five major improvements are:

- standardization and reconciliability of data models

- harmonized data quality processes

- comprehensibility through aggregation and data lineage concepts

- calculation engines for business issues

- common management reporting

It therefore has to be assessed how the IT infrastructures can be enhanced for scenario capability in order to benefit from the investments made and to avoid “diluting” the centralization through alternative systems.

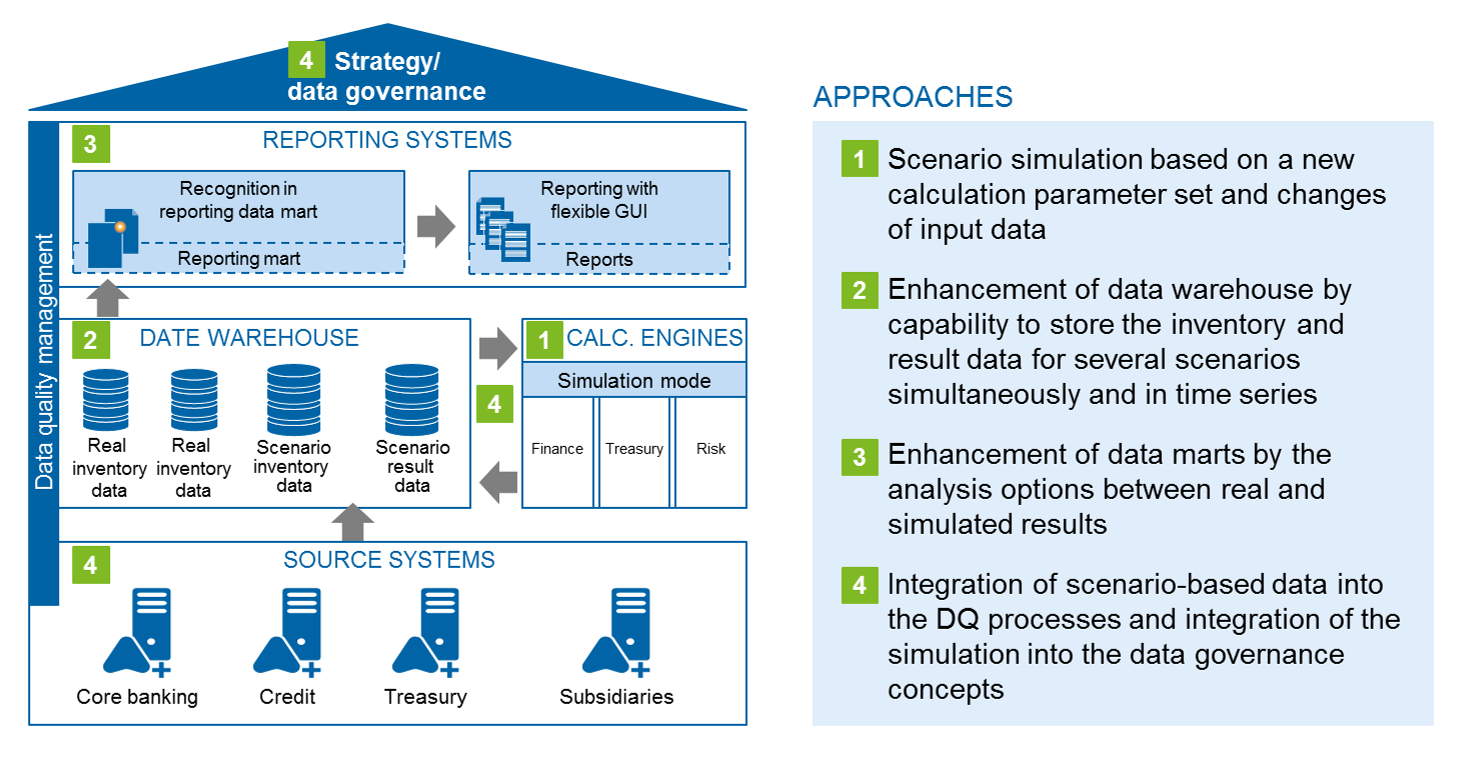

Figure 2 gives a schematic overview of the IT infrastructure and shows the four main approaches, which are described in the following sections.

1 Calculation engines

The existing calculation engines are designed to capture and aggregate an as-is image. They combine the raw data, primarily master and transaction data, into economic KPIs. In risk assessment in particular, defined sets of calculation parameters are used that can also contain forecasts (e.g. market data and ratings).

This approach keeps the existing calculation engines in their individual form and function as far as possible. Only the base parameterization and the data supply processes are changed so that alternative scenarios can be calculated as well.

A Finance, Treasury or Risk calculation engine, for example, generally needs business data, market data and risk factors as inputs. The same algorithms and jobs then produce simulation results from the newly delivered scenario data.

At this point it is important that the parameterization and the connection of scenario data are handled in an efficient and traceable way, and that the calculation engines themselves work without permanent data storage. This avoids the particular challenges of process synchronization when several independent scenarios are calculated.
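
The idea of reusing an unchanged engine with different parameter sets can be sketched as a pure function that holds no state between runs. The function `net_interest_income`, the position layout and all rates are hypothetical and heavily simplified; a real engine would be far more involved.

```python
from typing import Mapping


def net_interest_income(positions: list[dict], market: Mapping[str, float]) -> float:
    """Hypothetical engine: the same algorithm serves as-is and scenario runs.

    The function keeps no state, so independent scenarios cannot interfere
    with each other and need no synchronization.
    """
    total = 0.0
    for p in positions:
        rate = market[p["rate_key"]] + p["spread"]
        total += p["notional"] * rate
    return total


# Business data: identical for every run (illustrative figures).
positions = [
    {"notional": 1000.0, "rate_key": "euribor_3m", "spread": 0.02},   # loan
    {"notional": -800.0, "rate_key": "deposit_rate", "spread": 0.0},  # deposit
]

# Only the parameterization differs between the as-is run and the scenario run.
parameter_sets = {
    "as_is": {"euribor_3m": 0.03, "deposit_rate": 0.01},
    "rates_down": {"euribor_3m": 0.01, "deposit_rate": 0.0},
}

results = {
    name: net_interest_income(positions, market)
    for name, market in parameter_sets.items()
}
```

Because the engine receives everything it needs as arguments, each scenario run can be repeated or executed in parallel without touching stored intermediate results.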

2 Data warehouse

The central adjustments and challenges lie in the data architecture. To reuse as much of the existing architecture as possible, the simulation data should be integrated directly into the existing data warehouse. According to this proposal, all data is assigned to a scenario wherever possible. As a consequence, the as-is data becomes the “reality” scenario and is identified as such. Further scenarios hold the alternative data. Each data set thus has its own “scenario” dimension. This holds true for both the base data and the result data, and it does not mean that the actual data inventory has to be duplicated per scenario: by means of delta scenarios, only the changed data is generated and stored for a new scenario.
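
A delta scenario can be sketched as an overlay on the “reality” data. The in-memory dictionary below stands in for warehouse tables with a scenario dimension; the keys, values and the `resolve` helper are assumptions made for illustration.

```python
REALITY = "reality"

# (scenario, key) -> value. Non-reality scenarios store only changed records.
store = {
    (REALITY, "euribor_3m"): 0.03,
    (REALITY, "eur_usd"): 1.10,
    (REALITY, "gdp_growth"): 1.5,
    ("recession", "gdp_growth"): -2.0,  # the only delta of this scenario
}


def resolve(store: dict, scenario: str) -> dict:
    """Return the full view of a scenario: as-is data overlaid with its deltas."""
    view = {key: value for (scn, key), value in store.items() if scn == REALITY}
    view.update({key: value for (scn, key), value in store.items() if scn == scenario})
    return view


recession_view = resolve(store, "recession")
stored_deltas = sum(1 for (scn, _key) in store if scn == "recession")
```

The resolved view contains all three parameters although only one record was stored for the scenario, which is the point of the delta approach.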

Independent sub-scenarios that are valid only for certain areas (e.g. market data) can be combined with other scenarios in subsequent stages. In this way, a wide variety of input parameter sets can be derived. Specialist departments of the bank (e.g. group development and economics) make their work results available to the whole bank as individual scenarios.
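
Combining sub-scenarios might look like the following sketch. The `combine` helper and its rule of rejecting overlapping parameters are assumptions; a bank could equally define a precedence order between sub-scenarios instead.

```python
def combine(*sub_scenarios: dict) -> dict:
    """Merge independent sub-scenarios; reject overlaps instead of silently overwriting."""
    combined: dict = {}
    for sub in sub_scenarios:
        overlap = combined.keys() & sub.keys()
        if overlap:
            raise ValueError(f"sub-scenarios overlap on: {sorted(overlap)}")
        combined.update(sub)
    return combined


# Each department contributes only the area it is responsible for (illustrative values).
market_stress = {"euribor_3m": 0.01, "eur_usd": 1.00}  # e.g. from a market data team
macro_downturn = {"gdp_growth": -2.0}                  # e.g. from an economics team

total_bank_scenario = combine(market_stress, macro_downturn)
```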

To ensure repeatability and synchronization, the data warehouse has to be enhanced by a key system, which in turn increases the requirements on the aligned processes.

3 Reporting systems

Enhancing the existing management reporting requires only little effort, but is nevertheless vital for the acceptance of the solution. As-is data sets and simulation results have to be clearly separated in the existing reporting marts. However, it must remain possible to combine and connect them again where needed, so that they can be stored efficiently. The same requirements hold true for regulatory reporting. Reporting has to be enhanced to show in detail how management-relevant parameters are composed, through analysis options that compare real and simulated results and/or through the additional drill-down functionality required.
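
A comparison between real and simulated results with a simple drill-down could be sketched as follows. The row layout of the reporting mart, the segment names and all values are invented for the example.

```python
# Rows of a hypothetical reporting mart; the scenario column keeps
# as-is data and simulation results separate but joinable.
report_mart = [
    {"scenario": "reality", "kpi": "net_interest_income", "segment": "retail", "value": 300.0},
    {"scenario": "reality", "kpi": "net_interest_income", "segment": "corporate", "value": 200.0},
    {"scenario": "recession", "kpi": "net_interest_income", "segment": "retail", "value": 280.0},
    {"scenario": "recession", "kpi": "net_interest_income", "segment": "corporate", "value": 150.0},
]


def kpi_by_segment(rows: list[dict], scenario: str, kpi: str) -> dict:
    """Select one KPI of one scenario, broken down by segment."""
    return {
        r["segment"]: r["value"]
        for r in rows
        if r["scenario"] == scenario and r["kpi"] == kpi
    }


def compare(rows: list[dict], kpi: str, simulated: str, actual: str = "reality") -> dict:
    """Drill-down: simulated minus actual per segment, plus the total difference."""
    act = kpi_by_segment(rows, actual, kpi)
    sim = kpi_by_segment(rows, simulated, kpi)
    by_segment = {seg: sim[seg] - act[seg] for seg in act.keys() & sim.keys()}
    return {"total": sum(by_segment.values()), "by_segment": by_segment}


variance = compare(report_mart, "net_interest_income", "recession")
```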

4 Non-functional requirements and central cross-divisional functions

The non-functional requirements and methods (e.g. data quality management and data governance) are indispensable for the sustainable functionality and acceptance of the data warehouse within the group. Therefore, they have to be taken into account when the data warehouse is enhanced by simulation results and scenarios.

The existing cross-divisional systems, e.g. for data storage, archiving, and user and rights administration, can usually continue to be used without modification. This increases the acceptance of the existing systems and keeps the operation of the IT architecture cost-efficient.

Conclusion

This proposal shows that the requirement for “scenario capability” is becoming ever more important and that it can be integrated consistently into an existing data warehouse by adapting central and decentralized components. The outlined enhancements build on the basic framework of the IT architecture modernized as part of the BCBS #239 implementation and leverage the investments made.

Implementing the enhancements entails several challenges. However, it also offers the opportunity to transform the areas prioritized by the bank in an evolutionary, step-by-step way. An individual as-is analysis and its comparison with the target pictures to be developed are indispensable.

Overall, the described approach aims to promote cost-efficient, high-quality processes and to reduce manual workarounds. More complex representations are possible and sensible, depending on the business policies, e.g. when deriving calculation parameters from macroeconomic factors or when representing alternative business developments in the existing scenarios. Nevertheless, this solution outline should be seen as a first idea that has to be adapted to the individual needs of the credit institution.